I was more than a little intrigued recently when the need for a key management system cropped up for one of our Data capabilities. Key management solutions tend to be a particularly obscure part of the data world: hidden away and taken for granted in companies with established, complex data warehousing solutions, and rarely needed in young companies.

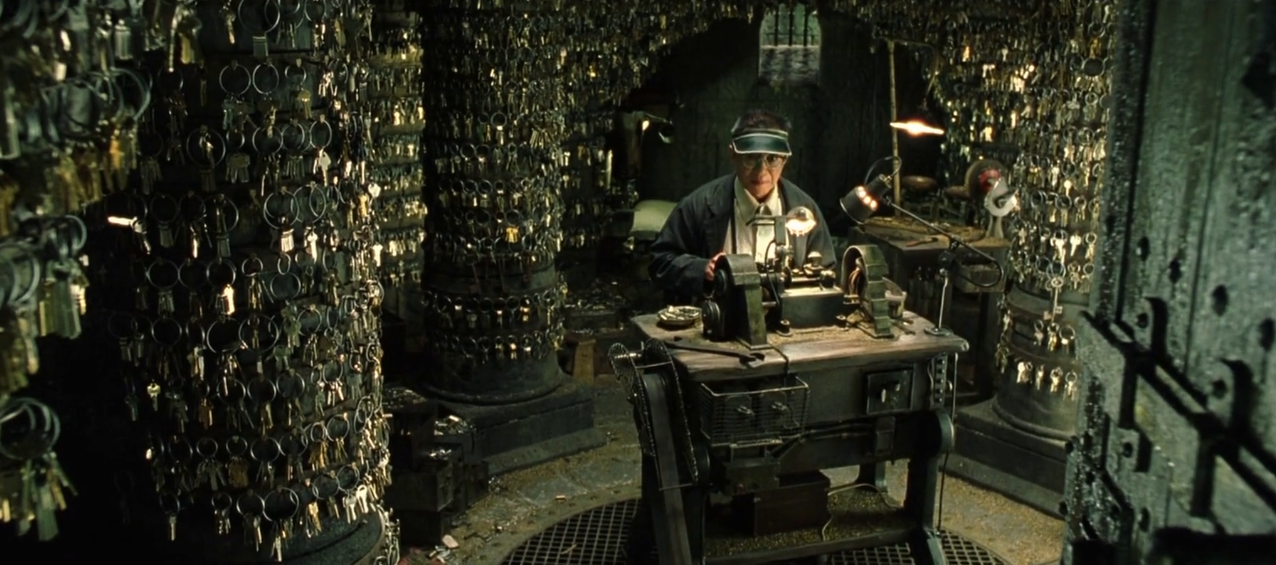

In Data, mentions of keys tend to elicit thoughts of primary keys, foreign keys, and surrogate keys. We are of course talking about these keys, but also about much, much more. Take, for example, the Keymaker program in The Matrix Reloaded. Yes, a key is unique and fits just one door, but it’s also a link, a doorway to a different location in the Matrix.

The genius is in knowing which key leads to which location, which keys sit close to each other, and how to plot a journey across an entire world through clever selection of keys. This genius is the knowledge embedded in the Keymaker program, far more than the physical keys themselves. You’d need a rather sophisticated Keymaker program to reliably store and reproduce this knowledge.

How does this relate to Data capabilities and why is it so intriguing?

I mentioned earlier that sophisticated key management solutions tend to be rare in younger companies.

- A younger company has relatively few systems, and tends to have manageable levels of disconnect between them and/or reasonable integration between them (either by design or by human glue¹).

- Older companies will have a larger number of systems participating in a process, for example multiple ordering, provisioning, billing and revenue management systems. Optimising a process becomes a multi-system, labour-intensive exercise requiring high levels of planning and strategising, plus substantial knowledge from technical and business SMEs.

Older companies, as they attempt to optimise a process, will typically identify a common thing around which they’d like to unify or standardise that process. For example, enabling customer self-service for Products X, Y and Z.

- Yet Products X, Y and Z are not easily identifiable in the multiple systems participating in the process.

- Additionally, transforming the process requires knowing that a set of references in one system for a customer is part of the same ‘thing’ as a set of references in another system for that same customer.

Assume for a moment that you already have the knowledge of how these references relate to each other. How then would you record the relationship between the set of references in one system and the sets of references in multiple other systems, and – here’s the genius – contextualise it as the ordering, provisioning and billing of Product X for customer Smith?

- That’s the Key Management System – a keymaker program that records the mappings across systems.

- It takes collaboration with SMEs to derive the knowledge necessary to map equivalences between systems. The key management system helps record this knowledge so it is easily accessible to every subsequent consumer and use case.

- It may need a reference data management solution and a stewardship community to keep complex mappings² up to date.

- When contextualising these keys into processes, data architecture and modelling principles are essential to conform concepts accurately and to ensure reusability and extensibility.
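
As a rough illustration of the points above, here is a minimal in-memory sketch of such a keymaker program. Everything in it is an assumption made for the example: the `SourceRef` and `KeyStore` names, the system names, the reference formats and the unified key `SMITH-PRODUCT-X` are all hypothetical, and a real solution would sit on a database with stewardship processes around it.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass(frozen=True)
class SourceRef:
    """An identifier exactly as it appears in one source system."""
    system: str     # hypothetical system name, e.g. "ordering" or "billing"
    reference: str  # the key or reference used inside that system


@dataclass
class KeyStore:
    """Records which source references belong to the same business 'thing'."""
    contexts: Dict[str, Dict[str, str]] = field(default_factory=dict)
    by_ref: Dict[SourceRef, str] = field(default_factory=dict)

    def register(self, unified_key: str, context: Dict[str, str],
                 refs: List[SourceRef]) -> None:
        # Check every reference first so a conflict leaves the store unchanged.
        for ref in refs:
            owner = self.by_ref.get(ref)
            if owner is not None and owner != unified_key:
                raise ValueError(f"{ref} is already mapped to {owner}")
        self.contexts[unified_key] = dict(context)
        for ref in refs:
            self.by_ref[ref] = unified_key

    def lookup(self, system: str, reference: str) -> Optional[str]:
        # From any system's reference to the unified key (None if unmapped).
        return self.by_ref.get(SourceRef(system, reference))

    def references(self, unified_key: str, system: str) -> List[str]:
        # From the unified key back to one system's references.
        return sorted(ref.reference for ref, key in self.by_ref.items()
                      if key == unified_key and ref.system == system)


# Hypothetical mapping: Product X for customer Smith across three systems.
store = KeyStore()
store.register(
    "SMITH-PRODUCT-X",
    {"customer": "Smith", "product": "Product X"},
    [SourceRef("ordering", "ORD-1001"),
     SourceRef("provisioning", "SVC-77"),
     SourceRef("billing", "ACC-5"),
     SourceRef("billing", "ACC-9")],
)
```

With this in place, `store.lookup("billing", "ACC-9")` resolves a billing reference to the unified key, and `store.references("SMITH-PRODUCT-X", "billing")` lists every billing reference that belongs to the same thing. Rejecting a reference that is already mapped elsewhere is a design choice for the sketch; it forces conflicting SME knowledge to be resolved before it is recorded.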

Critically, as you begin propagating these keys as foreign keys in your database, you’ll need to know that you can rebuild your keys identically each time. This avoids breaking foreign key integrity during recovery events (for example, after data corruption or upstream data issues).
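
One common way to get keys that rebuild identically is to derive them from the source values with a hash, rather than from a sequence or load order. The sketch below assumes that approach; the `surrogate_key` function, the normalisation rules and the 32-character truncation are illustrative choices, not something prescribed here.

```python
import hashlib


def surrogate_key(system: str, *natural_key_parts: str) -> str:
    """Derive a repeatable key from a system name and its natural key parts."""
    # Normalise so cosmetic differences (case, stray spaces) do not change the key.
    parts = [system.strip().lower()]
    parts += [part.strip().upper() for part in natural_key_parts]
    # Use a separator that should not occur in the data, so ("AB", "C")
    # and ("A", "BC") cannot collapse into the same payload.
    payload = "\x1f".join(parts)
    # Truncating the digest is an assumption made to keep the key compact.
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()[:32]


first_build = surrogate_key("billing", "ACC-5")
rebuild = surrogate_key(" Billing ", "acc-5")
```

Because the key depends only on its inputs, `first_build` and `rebuild` are equal, so a reload after a recovery event produces the same foreign key values as the original load.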

Taxonomy-wise, I’ve also heard this keymaker program referred to as:

- A key store – not to be confused with certificate storage for websites

- Vertical integration of systems (vertically lining up systems by the thing that drives their commonality)

We expect that the need for keymaker programs will crop up more often as organisations digitise and link up a multitude of systems for an increasing number of data products.

It’s a good idea to architect your keymaker program deliberately – perhaps as part of your architectural runway – as it will form the foundation for your data quality and help ensure your data products are fit for purpose.

Footnotes

1 Human glue: when your workforce maintains the referential integrity between systems. This tends to be more effective when activity volumes are quite low.

2 Rely on a stewardship community or, better yet, develop a database of record (DBoR) to manage these mappings. The DBoR is far more sophisticated, sustainable and extendable and, correspondingly, a far larger overall investment.